Google and Samsung just launched the AI features Apple couldn’t with Siri

Google just announced that Gemini will soon be able to take care of some multi-step tasks on your phone, like ordering food or hailing a car, starting with the Pixel 10, Pixel 10 Pro, and the just-announced Samsung Galaxy S26 phones. It all sounds a bit like the features Apple announced for Siri way back at the 2024 Worldwide Developers Conference, which Apple delayed in March 2025 and still hasn’t released.

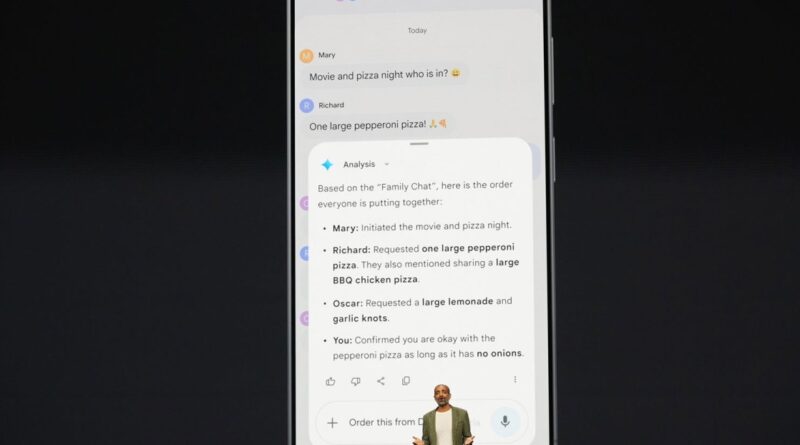

Onstage, Sameer Samat, Google’s president of Android, showed off a demo of how Gemini’s new agentic features would help wrangle a pizza dinner order from his busy family group chat. Samat asks Gemini to look at the chat thread, figure out what to order, and then place the order with a delivery app. Onscreen (in a prerecorded video; it wasn’t live), you can see Gemini working out what everyone wants from the group chat and showing that in a window. Then the user, via voice request, tells Gemini to complete that order, naming a specific pizzeria. Gemini then clicks through Grubhub to prep the order, all still onscreen. When the order is ready, Gemini sends an alert so the user can review it and actually press the submit button.

Setting aside that this task doesn’t seem that complicated to do yourself in the Grubhub app (or even by calling the pizzeria to talk through it with a human), this is a potentially big moment for agentic AI. Google recently added the ability for Gemini to auto-browse for users in Chrome, and being able to do something similar right inside of Android feels like a logical next step; Google clearly wants Gemini to be thought of as a helpful agent or productivity partner rather than just a chatbot or a series of AI models.

Assuming the agentic Gemini features launch “soon,” as Google is promising, and that Apple doesn’t pull a rabbit out of its hat, Google will beat Apple to the punch on some of Apple’s most impressive Apple Intelligence demos (also shown only in prerecorded videos) from that WWDC 2024 show. One feature Apple showed off would have let Siri understand what’s on your screen and take action on it, meaning you could ask Siri to add an address from a Messages thread to the contact card of the person you’re texting with. Apple demoed how Siri would be able to take actions inside of and across apps for you. The company said Siri would even be able to understand your personal context, meaning you could ask it when your mom’s flight was landing and the assistant would pull the information from an email and show it to you.

Nearly two years later, none of that is available yet. When Apple announced the features would be delayed, the company even pulled an advertisement showing off the features. And based on reporting from Bloomberg, some of the features may not arrive until iOS 27.

There are still many questions about Gemini’s new capabilities, of course. They’ll need to actually ship. We’ll have to try them to see if they are as useful and functional as advertised — Google is calling this initial launch a “beta,” so there could be some rough edges. And we don’t know how many developers will actually let Gemini browse through their apps on behalf of users, which Verge editor-in-chief Nilay Patel likes to call the DoorDash problem. (Google says Gemini will be able to work in “select rideshare and food apps.”)

But Google seems to have leapfrogged Apple in a big way, and now Apple has even more to do to catch up.